NVIDIA has launched Nemotron-3, a release that signals where enterprise AI systems are heading.

NVIDIA frames Nemotron-3 as infrastructure for agentic AI, systems capable of planning tasks, reasoning across complex workflows, and interacting with enterprise tools autonomously.

The announcement reflects a broader shift across the AI ecosystem. Models are no longer being optimized purely for chat or content generation.

Increasingly, they are being designed for environments where AI agents collaborate, retrieve information, verify outputs, and execute actions within software systems.

From Generative AI to Agentic Systems

AI Technology Insight’s earlier coverage of NVIDIA GTC 2026 highlighted how NVIDIA is pushing toward a full-stack AI ecosystem, combining accelerated computing, foundation models, and enterprise AI infrastructure.

The conference themes emphasized agentic AI, AI factories, and open model ecosystems as the next phase of enterprise adoption.

Nemotron-3 fits directly into that broader strategy. Rather than treating models as standalone tools, NVIDIA appears to be designing them as components within a larger architecture of autonomous AI systems operating across enterprise environments.

Generative AI achieved rapid enterprise adoption because the interaction model was simple. A user provides a prompt. The model generates a response.

Most enterprise work does not behave this way.

Tasks such as software debugging, financial analysis, supply-chain planning, or cybersecurity investigation involve multiple stages. Data retrieval. Hypothesis testing. Verification. Iterative reasoning. Often across multiple information sources.

These workflows increasingly require AI agents capable of executing multi-step processes rather than generating isolated outputs.

According to Harvard Business Review, agentic AI refers to systems that can pursue goals autonomously within a digital environment. They observe data, plan actions, interact with tools, and adapt decisions based on intermediate results.

The potential economic impact is significant. McKinsey research estimates that generative AI could create $2.6 trillion to $4.4 trillion in annual value across industries, largely by improving productivity and automating knowledge work.

Yet the architecture required to support these systems differs substantially from conventional language models.

Nemotron-3 appears to be designed specifically for that emerging environment.

Nemotron-3 Is Designed for Reasoning Workloads

Traditional large language models (LLMs) rely primarily on transformer architectures optimized for next-token prediction. They work well for generating text but become inefficient when they must reason across extremely large contexts or execute long reasoning chains.

Nemotron-3 introduces architectural changes intended to address these limitations.

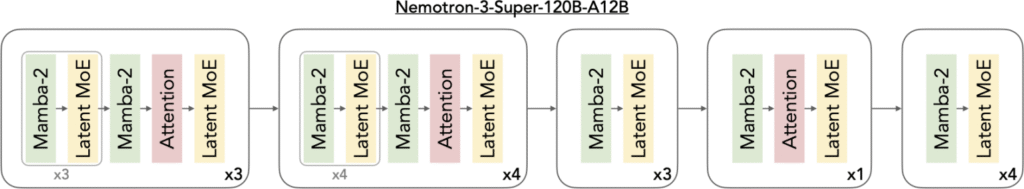

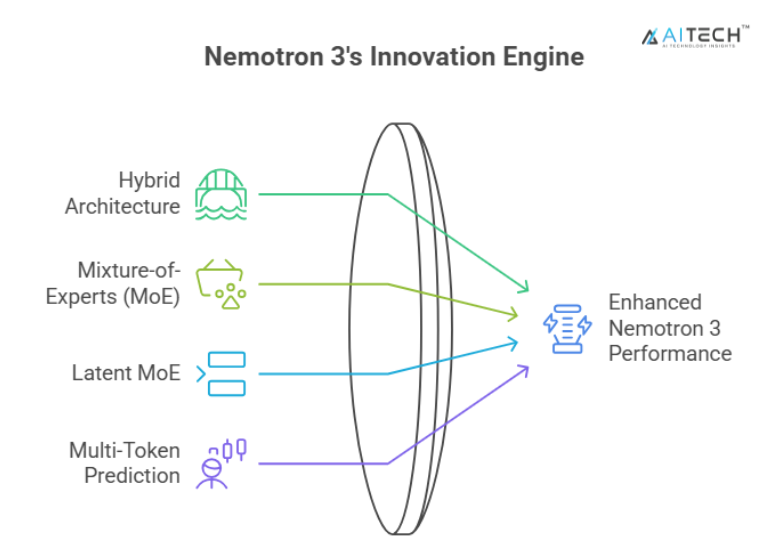

Source: NVIDIA Technical blog

The model family combines Mixture-of-Experts architecture with hybrid Mamba-Transformer sequence modeling, improving throughput while allowing the system to process extremely large contexts.

Mamba-style state space models are designed to handle long sequences more efficiently than traditional transformers, reducing memory overhead and improving speed when reasoning across large context windows.
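The memory argument above can be illustrated with a toy recurrence. This is a minimal sketch of a generic scalar linear state-space update, not Mamba's selective scan and not Nemotron-3's implementation; the coefficients `a`, `b`, `c` and both function names are assumptions chosen for illustration. Counting the attention cache in positions is also a simplification that ignores layers and heads.

```python
def ssm_scan(xs, a=0.9, b=0.1, c=1.0):
    """Scalar linear state-space recurrence: h_t = a*h_{t-1} + b*x_t, y_t = c*h_t."""
    h = 0.0          # the entire memory of the sequence so far
    ys = []
    for x in xs:     # one constant-cost update per token
        h = a * h + b * x
        ys.append(c * h)
    return ys, h


def memory_slots(seq_len, state_size=1):
    """Slots held while processing: attention caches every position, the SSM keeps one state."""
    return {"attention_cache": seq_len, "ssm_state": state_size}
```

The point of the comparison is that the recurrent state stays the same size whether the input is a thousand tokens or a million, while a cache of past positions grows with the sequence.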

Context capacity can extend toward one million tokens, allowing models to analyze significantly larger information environments than earlier LLM architectures.

For agentic AI systems, context length is critical.

Agents frequently need to process entire document repositories, enterprise knowledge bases, or software codebases. When context windows are limited, reasoning chains fragment, and system performance declines.

Extended context windows allow agents to maintain situational awareness throughout complex workflows.

Efficiency is equally important.

Agentic systems generate far more intermediate tokens than conversational AI applications. Each reasoning step adds computational cost. At enterprise scale, these costs become operational constraints.

Mixture-of-Experts architectures mitigate this issue by activating only a portion of model parameters during inference, improving efficiency without sacrificing capability. Nemotron-3 therefore reflects a design philosophy centered on reasoning efficiency rather than raw model size.
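To make "activating only a portion of model parameters" concrete, here is a minimal sketch of generic top-k expert routing. It is not NVIDIA's router: the expert count, `k`, the router scores, and the toy experts (simple scaling functions) are all hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]  # weights renormalized to sum to 1


def moe_forward(x, experts, router_scores, k=2):
    """Combine outputs of the selected experts only; the others are never called."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Four hypothetical experts; each just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
chosen = route_top_k([0.1, 2.0, 0.3, 1.5], k=2)
active_fraction = len(chosen) / len(experts)  # 2 of 4 experts run for this token
```

With these scores, only the experts at indices 1 and 3 run, so half of the expert parameters stay idle for this input while the total parameter count is unchanged.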

NVIDIA also points to early benchmark results demonstrating how the model performs in agent-driven research workflows. Nemotron-3 powers NVIDIA’s AI-Q research agent, which ranked No. 1 on the DeepResearch Bench and DeepResearch Bench II leaderboards.

These benchmarks evaluate how well AI systems conduct multi-step research tasks across large document collections while maintaining coherent reasoning chains.

The results suggest the model is optimized not only for text generation but for extended analytical workflows typical of agentic systems.

Multi-Agent Systems Are the Real Target

Nemotron-3 is not optimized for a single AI agent performing isolated tasks.

The architecture anticipates environments where multiple agents collaborate within a workflow.

One agent might retrieve documents from enterprise databases. Another analyzes the information. A third agent validates results or executes actions through APIs.

NVIDIA explicitly describes Nemotron-3 as a model family designed to support multi-agent reasoning systems that plan, retrieve, verify, and execute tasks across long workflows.
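The retrieve, analyze, and verify roles described above can be sketched as a small pipeline over shared memory. This is an illustrative toy under stated assumptions: each "agent" is a plain function standing in for a model call, the in-memory `corpus` stands in for an enterprise database, and every name here is hypothetical rather than part of any NVIDIA API.

```python
from dataclasses import dataclass, field


@dataclass
class SharedMemory:
    """Scratchpad that every agent reads from and writes to."""
    notes: dict = field(default_factory=dict)


def retriever(task, memory):
    # Stand-in for a database or search call.
    corpus = {"q3 revenue": "Q3 revenue was 120", "q2 revenue": "Q2 revenue was 100"}
    memory.notes["docs"] = [text for key, text in corpus.items() if key in task.lower()]


def analyst(task, memory):
    # Stand-in for model reasoning: pick the first retrieved document as the answer.
    docs = memory.notes.get("docs", [])
    memory.notes["answer"] = docs[0] if docs else None


def verifier(task, memory):
    # Accept the answer only if it is grounded in a retrieved document.
    answer = memory.notes.get("answer")
    memory.notes["verified"] = answer is not None and answer in memory.notes["docs"]


def run_pipeline(task):
    memory = SharedMemory()
    for agent in (retriever, analyst, verifier):
        agent(task, memory)
    return memory.notes
```

Calling `run_pipeline("What was Q3 revenue?")` yields a verified answer, while a task with no matching document ends with `verified` set to `False`, which is the signal an orchestrator would use to retry or escalate.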

Research into enterprise AI systems increasingly supports this approach.

Studies examining compound AI systems show that performance often depends less on the intelligence of individual models and more on how effectively agents coordinate tasks, share memory, and verify results.

In many experimental deployments, multi-agent systems outperform single-model architectures when solving complex enterprise problems.

This shift changes how organizations design AI infrastructure.

The focus moves toward orchestration frameworks, memory layers, retrieval systems, and tool integration rather than individual model performance alone.

Nemotron-3 reflects this systems-level perspective.

NVIDIA’s Strategic Expansion Beyond Hardware

Nemotron-3 also highlights NVIDIA’s evolving role within the AI ecosystem.

The company built its dominance through GPU hardware, which remains the foundation of modern AI training and inference infrastructure. However, the market is increasingly shifting toward vertically integrated platforms.

Hardware alone is no longer sufficient.

Organizations building production AI systems require a complete stack that includes:

- Compute infrastructure

- Foundation models

- Training frameworks

- Deployment tools

- Agent orchestration environments

NVIDIA has gradually expanded across these layers.

The Nemotron models are released with open weights and training resources, allowing developers to fine-tune them for domain-specific applications. This approach encourages experimentation while reinforcing NVIDIA’s broader platform ecosystem.

If developers adopt Nemotron-based models widely, that adoption strengthens NVIDIA’s position across the entire AI stack.

In effect, the company is evolving from a semiconductor manufacturer into a full-stack AI infrastructure provider.

The Economics of Agentic AI

Economic constraints remain one of the largest barriers to widespread adoption of agentic systems.

Agents do not simply generate answers. They generate reasoning.

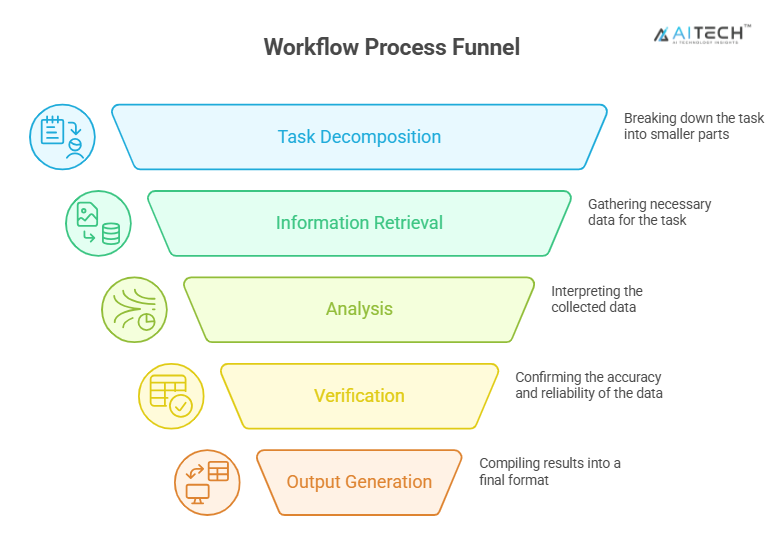

A typical workflow may involve multiple stages:

- Task decomposition

- Information retrieval

- Analysis

- Verification

- Output generation

Each stage consumes inference cycles and generates tokens. This makes efficiency improvements critical.
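A back-of-the-envelope calculation shows how the stages above add up. The token counts, the price per million tokens, and the daily run volume below are hypothetical placeholders, not measurements of Nemotron-3 or any real deployment; substitute observed values before drawing conclusions.

```python
# Hypothetical token counts per stage; replace with measured values.
STAGE_TOKENS = {
    "task_decomposition": 400,
    "information_retrieval": 1500,
    "analysis": 2500,
    "verification": 800,
    "output_generation": 600,
}


def workflow_cost(stage_tokens, price_per_million_tokens, runs_per_day):
    """Return (tokens per run, daily cost) for a multi-stage agent workflow."""
    tokens_per_run = sum(stage_tokens.values())
    daily_cost = tokens_per_run * runs_per_day * price_per_million_tokens / 1_000_000
    return tokens_per_run, daily_cost


tokens, cost = workflow_cost(STAGE_TOKENS, price_per_million_tokens=1.0, runs_per_day=10_000)
```

Under these assumed numbers, the final output accounts for 600 of the 5,800 tokens in a run, so most of the spend goes to intermediate reasoning that the user never sees.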

Many organizations are still experimenting with pilot deployments, and scaling agentic systems requires significant infrastructure investment.

Models such as Nemotron-3 aim to reduce these costs by improving reasoning efficiency and throughput.

Governance and Security Challenges

The rise of agentic AI also introduces new governance challenges.

Traditional AI systems generate recommendations. Humans remain responsible for decisions.

Autonomous agents may take actions directly within enterprise environments.

This introduces risks.

Researchers studying enterprise AI systems have identified vulnerabilities including cascading action chains, unintended tool execution, and complex interactions between multiple agents.

These risks require stronger governance frameworks.

Organizations deploying agentic AI will need enhanced monitoring, explainability tools, and security controls to ensure that autonomous systems operate safely within enterprise infrastructure.
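One way to picture such controls is a thin guard between agents and tools. The sketch below is a hypothetical illustration, not a reference to any specific governance product: `ToolGuard`, its allowlist, its action budget, and its audit log format are all assumptions. It targets two of the risks named above: unintended tool execution (allowlist) and cascading action chains (budget).

```python
from dataclasses import dataclass, field


@dataclass
class ToolGuard:
    allowed_tools: set
    max_actions: int = 5
    audit_log: list = field(default_factory=list)

    def call(self, agent, tool_name, tool_fn, *args):
        """Run tool_fn only if it is allowlisted and the action budget remains."""
        if tool_name not in self.allowed_tools:
            self.audit_log.append((agent, tool_name, "blocked: not allowlisted"))
            raise PermissionError(f"{agent} may not call {tool_name}")
        executed = sum(1 for entry in self.audit_log if entry[2] == "ok")
        if executed >= self.max_actions:
            self.audit_log.append((agent, tool_name, "blocked: action budget exhausted"))
            raise RuntimeError("action budget exhausted")
        result = tool_fn(*args)
        self.audit_log.append((agent, tool_name, "ok"))
        return result
```

Every attempt, allowed or blocked, lands in `audit_log`, which gives monitoring and review tooling a single record of what each agent tried to do.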

Without these safeguards, large-scale deployment becomes difficult.

The Industry Is Early in the Agentic Cycle

Despite growing investment, agentic AI remains in an early development phase.

Analysts estimate that many early projects will fail due to unclear business value, infrastructure limitations, or governance challenges.

This pattern is typical for emerging technologies.

Initial experimentation generates enthusiasm. Early deployments reveal operational constraints. Eventually, more mature architectures emerge.

Nemotron-3 should therefore be viewed as a directional signal rather than a final solution.

It demonstrates how model architecture is beginning to evolve in response to the demands of autonomous AI systems.

Strategic Implications for Enterprise AI Leaders

For CIOs and AI decision-makers, the most important takeaway is not about a single model release. It is about architecture.

Enterprise AI is shifting from isolated applications toward distributed systems of cooperating AI agents embedded throughout organizational workflows.

Supporting this shift requires new infrastructure.

Organizations must invest in orchestration frameworks, scalable inference environments, governance mechanisms, and observability systems.

Models like Nemotron-3 provide one layer of this emerging stack.

But the real transformation lies in how these models are integrated into enterprise technology ecosystems.

Conclusion

NVIDIA Nemotron-3 represents a meaningful step in the evolution of enterprise AI systems.

The model family reflects a broader transition from generative interfaces toward autonomous, agent-driven architectures capable of executing complex workflows.

Agentic AI promises substantial productivity gains, but it also introduces new technical and governance challenges.

Nemotron-3 does not resolve those challenges entirely.

What it does reveal is the direction in which AI infrastructure is moving. Models are increasingly being designed not for conversation, but for autonomous reasoning within distributed systems.

For enterprise leaders, the strategic question is no longer whether agentic AI will emerge.

The real question is how quickly organizations can build the infrastructure required to support it.

FAQs

1. What is NVIDIA Nemotron-3?

NVIDIA Nemotron-3 is an open AI model family designed for agentic AI systems that perform multi-step reasoning, tool interaction, and autonomous task execution.

2. What is agentic AI in enterprise technology?

Agentic AI refers to AI systems that autonomously plan tasks, interact with tools, and execute workflows to achieve defined goals.

3. How is Nemotron-3 different from traditional LLMs?

Nemotron-3 is optimized for long-context reasoning and multi-agent workflows, rather than only conversational text generation.

4. What enterprise problems can agentic AI solve?

Agentic AI can automate research analysis, software debugging, IT operations monitoring, and complex data workflows.

5. Why is NVIDIA investing in agentic AI models?

NVIDIA is developing models like Nemotron-3 to support autonomous AI systems running on its accelerated computing infrastructure.
