Luma AI is pushing generative video in a direction that addresses one of the technology’s most persistent challenges.

While AI models have become increasingly capable of creating visual scenes from text prompts, reproducing convincing human motion and emotional nuance entirely through synthetic generation remains difficult.

Most text-to-video systems attempt to construct everything at once. Characters, movement, lighting, and environments are all generated from a single prompt.

Although the results can be visually striking, maintaining stable and believable human motion across longer sequences often exposes the limitations of these models.

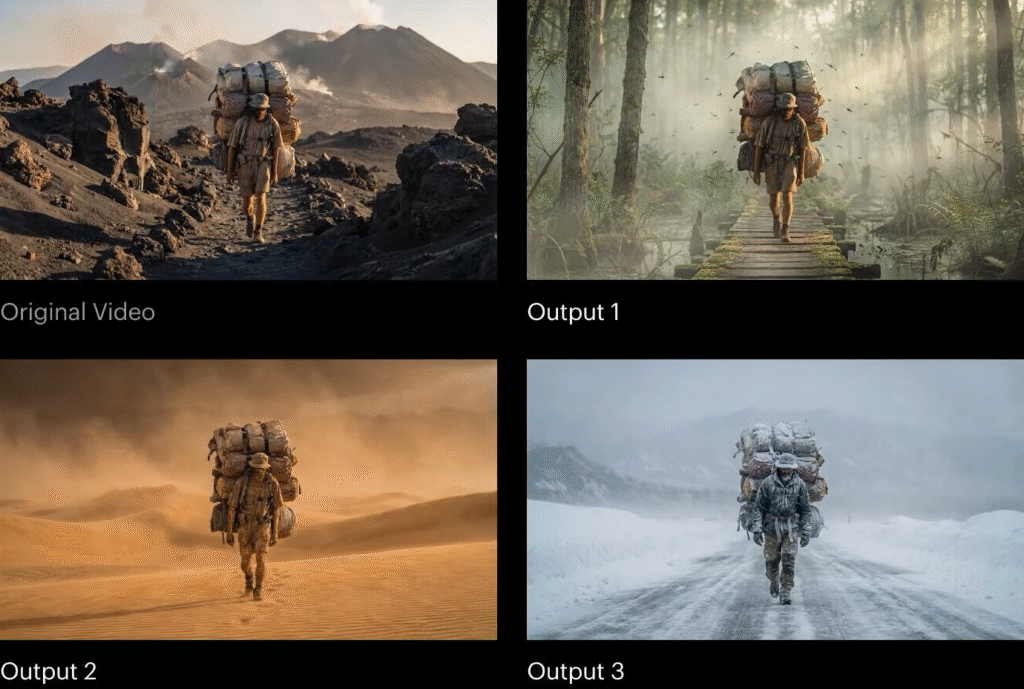

Rather than generating an entire scene from scratch, the system begins with real footage of a human performance. AI is then used to reinterpret the surrounding environment, costumes, and visual context while preserving the actor’s original movement, timing, and expression.

This approach signals a meaningful shift in the development of generative video systems. Instead of attempting to simulate human performance entirely, AI becomes a tool that enhances and transforms real captured motion.

A Hybrid Model for AI Video Generation

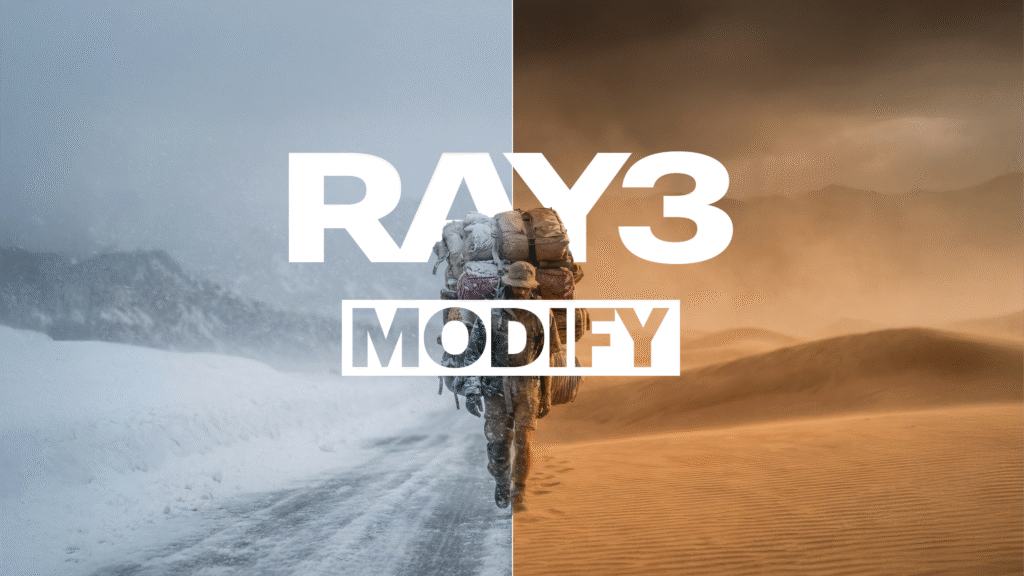

Luma’s Ray3 Modify model effectively separates the two components of video creation.

Source: Luma AI

Human performance remains fixed, captured through real video footage. The AI then reconstructs or transforms the surrounding environment.

This hybrid workflow solves one of the most complex problems in generative video. Human motion synthesis.

By preserving the original performance, the model maintains natural eye movement, body balance, and timing while allowing the AI to reinterpret the scene visually.

For filmmakers and creators, this creates a flexible production model. A single performance can be transformed into multiple visual worlds without reshooting the scene.

Start and End Frame Control

Another important feature introduced by Luma’s model is start and end frame conditioning.

Creators provide two visual frames representing the beginning and the end of a scene. The AI then generates the motion between those two states.

Source: Luma AI

This technique introduces constraints into the generative process, which significantly improves continuity.

Instead of relying entirely on open prompts, the model operates within defined visual boundaries. Camera movement, character behavior, and environmental transitions remain consistent with the supplied frames.

For production teams, this adds an important layer of creative control to generative video workflows.

Why This Approach Matters

The architecture behind Luma’s system reflects a broader shift happening across the AI ecosystem.

Rather than replacing real-world data, many emerging AI systems are designed to augment human input with generative capabilities.

A similar perspective on the evolving role of video infrastructure also emerged during discussions at Mobile World Congress 2026. As highlighted by Marketing Technology Insights, industry leaders increasingly view video not simply as a media format but as a shared systems challenge spanning telecom networks, AI platforms, cloud infrastructure, and device ecosystems.

In video production, human performance becomes the stable foundation. AI becomes the adaptive layer that reshapes the surrounding environment.

This hybrid approach allows generative AI to integrate more effectively into professional production pipelines.

A Subtle Shift in AI Infrastructure

The evolution of generative video systems like Luma’s also reflects a broader shift occurring across the AI technology stack.

In recent industry discussions, particularly across telecom and semiconductor ecosystems, increasing attention has been placed on distributed AI architectures.

These systems move certain forms of intelligence closer to where data is captured, such as cameras, devices, and network edges, rather than processing everything exclusively in centralized cloud environments.

“This current decline in the visual arts, and Hollywood production, […] has to stop,” the CEO of Luma AI, Amit Jain said. “It stops by people trying out new ideas, and AI is the only way.”

This architectural direction suggests a future where visual data can be interpreted and enriched closer to the source before entering larger AI pipelines.

Seen through this lens, Luma’s approach highlights an interesting pattern in how AI workflows may evolve. The model begins with real captured footage, preserves the human performance, and then applies generative transformations to the surrounding scene.

While designed primarily for creative production, this type of workflow echoes a broader technological idea. Data is captured in the physical world, interpreted through intelligent systems, and then enhanced through generative models.

A Digital Stage for AI Storytelling

One way to understand this evolution is through the metaphor of theatre.

In theatre, actors perform on a stage while the environment around them changes through lighting, scenery, and effects.

Generative video AI is beginning to operate in a similar way.

The performance remains constant. The environment becomes programmable.

Tools like Luma’s Ray3 Modify effectively turn recorded footage into a digital stage, where AI can reinterpret the surrounding world without altering the actor’s performance.

What This Signals for the Future of AI Media

The convergence of generative AI and edge computing suggests that the next phase of video production will not be defined solely by better models.

It will depend on how AI integrates with the broader technology stack.

Edge processors will capture and interpret motion. Networks will distribute AI workloads. Generative models will reshape visual environments.

Together, these systems could transform how visual content is created, edited, and distributed.

Luma’s model offers an early glimpse of that future. A production workflow where human performance anchors the narrative, while AI dynamically constructs the world around it.

FAQs

1. What is performance-preserving generative video AI?

Performance-preserving generative video AI is a type of model that uses real filmed human motion as the base layer and then applies AI to modify the surrounding environment, visual style, or scene elements.

2. How is generative video AI changing media production workflows?

Generative video AI is allowing production teams to capture a performance once and generate multiple visual environments afterward. This reduces the need for physical sets, location shoots, and complex visual effects pipelines while enabling faster creative iteration.

3. Why is human motion still difficult for AI video models to generate?

Human motion involves subtle dynamics such as balance, eye movement, timing, and micro-expressions. Generative models can create frames individually, but maintaining realistic movement across long sequences requires complex motion understanding and temporal consistency.

4. What industries could benefit most from generative video AI?

Beyond filmmaking, generative video AI has potential applications in advertising, gaming, enterprise training, marketing content production, and immersive digital experiences. Organizations can use these tools to scale visual content while reducing production costs.

5. How might AI infrastructure influence the future of generative video?

Advances in AI infrastructure, including distributed computing and edge processing, may enable faster video analysis and generation closer to where content is captured. This could support real-time media creation workflows and new interactive visual experiences.

Discover the future of AI, one insight at a time – stay informed, stay ahead with AI Tech Insights.

To share your insights, please write to us at info@intentamplify.com