In most companies, artificial intelligence did not enter through strategy. It entered through performance. A model reduced churn, improved fraud detection, accelerated underwriting, and shortened service queues. Leadership approved the expansion because the numbers worked.

Now the same systems are forcing governance conversations that the organization never planned to have. The gap between what these systems do and what the organization can account for is an operational risk.

Regulation Turned AI Into a Managed Function

The EU AI Act, formally adopted in 2024, reframed AI from a productivity tool into a regulated corporate function. High-risk systems include hiring models, credit scoring, and the management of critical infrastructure.

As the Act's obligations phase in, all of them must carry documented oversight, auditability, and human accountability. Not optional. Companies serving European customers must demonstrate transparency and risk controls before deployment, not after a failure.

The United States has no single unified AI law, but the regulatory direction is consistent. The U.S. Federal Trade Commission warned in 2024 that companies remain legally responsible for harms caused by automated decision systems, including third-party models embedded inside products.

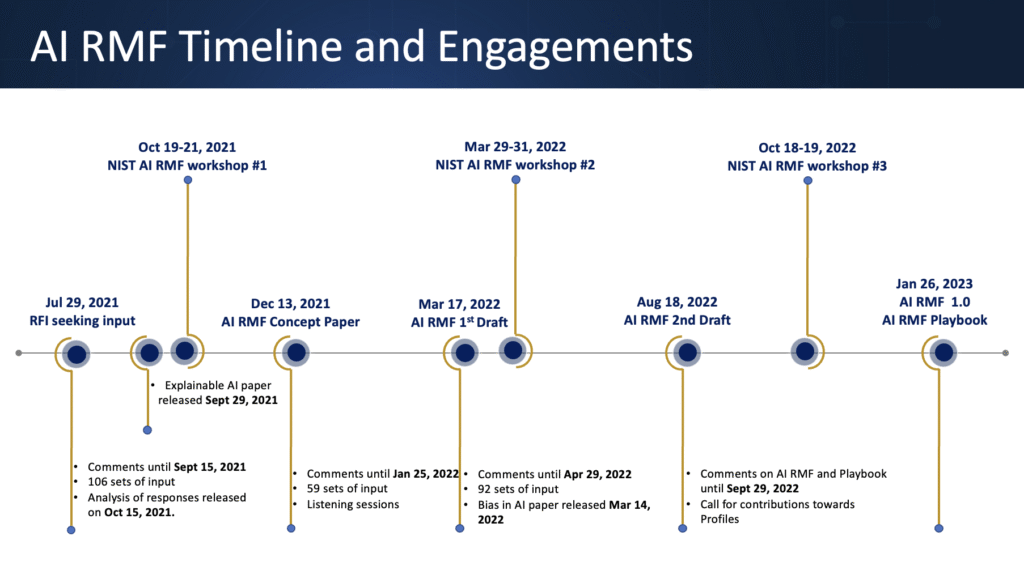

In parallel, the National Institute of Standards and Technology expanded the guidance around its AI Risk Management Framework, including a profile for generative AI, emphasizing continuous monitoring, documentation, and governance rather than one-time validation.

The implication is simple. If a model makes a decision, the company owns the decision.

When AI Stops Advising and Starts Acting

That distinction matters because AI is no longer advisory.

Banks increasingly allow models to block transactions in real time. Insurance carriers automatically adjust premiums using behavioral signals. HR platforms rank applicants before a human reads a résumé.

The Bank for International Settlements noted in a 2024 supervisory analysis that financial institutions now deploy machine learning in processes where outputs directly trigger financial actions.

This crosses a line. The software is no longer assisting judgment. It is exercising it.

This introduces a new executive question. Not “does the model perform?” but “who is accountable when it performs correctly for the wrong reasons?”

The Governance Gap Inside Organizations

Many leaders still treat AI risk as a data science problem. It is not. It is a management control problem.

Large language models illustrate the gap. A system can meet accuracy benchmarks while still producing discriminatory outcomes, hallucinated financial explanations, or inconsistent reasoning under slightly different prompts.

The model behaves probabilistically while corporate accountability remains deterministic. Courts and regulators do not audit confidence intervals. They assign responsibility.

McKinsey’s 2024 global AI survey found that more than 40% of organizations using generative AI experienced at least one significant risk event, such as data leakage or incorrect automated outputs affecting operations. Fewer than one-third had lifecycle governance in place. Adoption outran oversight.

Accountability Versus Speed

Here is the uncomfortable trade-off. Tight governance slows deployment. Loose governance scales liability.

AI accountability programs, therefore, resemble financial internal controls. Model inventories. Approval workflows. Audit trails. Continuous monitoring for drift. Documented human review points.

Not only because regulators asked, but because executives discovered a practical truth. When decisions are automated, undocumented behavior becomes enterprise risk.
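To make "continuous monitoring for drift" concrete, here is a minimal Python sketch of one common drift metric, the Population Stability Index (PSI). It compares the distribution of model scores at validation time with scores on live traffic. The function names, the ten equal-width bins, and the 0.25 alert threshold are assumptions chosen for illustration; 0.25 is a widely used rule of thumb in credit-risk practice, not a regulatory requirement.

```python
import math
from typing import Sequence

# Assumption: a common industry rule of thumb, not a regulatory figure.
DRIFT_ALERT_THRESHOLD = 0.25


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """Compare validation-time scores (expected) with live scores (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def check_drift(model_id: str, baseline: Sequence[float], live: Sequence[float]) -> str:
    """Hypothetical monitoring hook: flag the model owner when drift is large."""
    psi = population_stability_index(baseline, live)
    if psi >= DRIFT_ALERT_THRESHOLD:
        return f"{model_id}: PSI={psi:.3f}, escalate to model owner for review"
    return f"{model_id}: PSI={psi:.3f}, within tolerance"
```

In use, a scheduled job might call `check_drift("credit-v2", validation_scores, last_week_scores)` and route any escalation message to the named owner. The point for governance is less the metric itself than the fact that the check runs continuously and its result lands with an accountable person.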

There is also a structural issue. Many modern systems depend on foundation models that the enterprise did not build. Responsibility becomes asymmetric. Vendors provide capability. The deploying company provides accountability.

Contracts rarely transfer that obligation when outputs affect customers, employees, or financial transactions.

Why Boards Are Now Involved

AI has moved from analysis to action. That alone explains the urgency.

The organizations adapting fastest are not the ones with the most advanced models. They are the ones treating AI like any other decision-making infrastructure, comparable to financial reporting systems or safety-critical engineering.

Controlled environments, traceable processes, named owners.

The technology conversation used to focus on intelligence. It now focuses on responsibility. And that shift will shape adoption more than any model breakthrough released this year.

FAQs

1) What does AI accountability actually mean for a company?

AI accountability means the organization is responsible for every automated decision its systems make, whether the model is built internally or supplied by a vendor.

2) Who inside an organization should own AI accountability?

Ownership is shared, but each system needs a named operational owner. Technology teams manage model performance and monitoring, legal and compliance oversee regulatory exposure, and executive leadership is accountable for business impact.

3) Why are regulators concerned about AI decision-making now?

Automated hiring screens, transaction blocking, underwriting, and pricing decisions directly affect individuals and markets. Regulators are focusing on transparency, discrimination risk, consumer harm, and the inability to challenge opaque decisions.

4) What risks do companies face if they lack AI governance controls?

Primary risks include regulatory enforcement, litigation exposure, discriminatory outcomes, reputational damage, and operational disruption caused by incorrect automated actions. Much of the financial impact is indirect, showing up as remediation costs and lost customer trust rather than as a single fine.

5) How can executives operationalize AI accountability without slowing innovation?

By treating AI like financial reporting infrastructure. Maintain a model inventory, require pre-deployment reviews for high-impact use cases, monitor outputs continuously, log decisions, and define human escalation paths.
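The controls listed in this answer can be sketched in a few lines of Python. This is a hypothetical illustration, not a reference implementation: `ModelRecord`, `DecisionLog`, `decide`, the 0.8 decision threshold, and the 0.1 escalation band are invented names and values. It shows a model inventory entry with a named owner, a pre-deployment gate that blocks unreviewed high-impact models, a human escalation path for borderline scores, and a decision log that ties each output to a model and its owner.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ModelRecord:
    """One entry in a hypothetical model inventory."""
    model_id: str
    owner: str               # a named, accountable person, not a team alias
    vendor: Optional[str]    # None for models built in-house
    high_impact: bool        # e.g. hiring, credit, pricing, transaction blocking
    review_approved: bool = False


@dataclass
class DecisionLog:
    """Append-only record tying each automated output to a model and owner."""
    entries: list = field(default_factory=list)

    def record(self, model: ModelRecord, input_id: str, output: str, route: str) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_id": model.model_id,
            "owner": model.owner,
            "input_id": input_id,
            "output": output,
            "route": route,
        })


class DeploymentBlocked(Exception):
    """Raised when a high-impact model lacks an approved pre-deployment review."""


def decide(model: ModelRecord, input_id: str, score: float, log: DecisionLog,
           threshold: float = 0.8, band: float = 0.1) -> str:
    # Pre-deployment gate: high-impact models must have a completed review.
    if model.high_impact and not model.review_approved:
        raise DeploymentBlocked(f"{model.model_id} has no approved pre-deployment review")
    # Escalation path (hypothetical values): scores near the threshold go to a
    # human reviewer instead of triggering an automated action.
    if abs(score - threshold) < band:
        route, output = "human_review", "pending"
    else:
        route = "automated"
        output = "block" if score >= threshold else "allow"
    log.record(model, input_id, output, route)
    return output
```

The design choice worth noting is that the gate and the log sit in the same call path as the decision itself, so an automated action cannot happen without leaving a record that names an owner.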


To share your insights, please write to us at info@intentamplify.com